Introduction

WebAssembly (Wasm) was initially designed as a binary instruction format for running code efficiently, at near-native speed, within web browsers. The original use cases focused on augmenting JavaScript in the browser to run native code in a fast, portable, and secure way for games, 3D graphics, etc.

However, its potential extends far beyond the browser. In this blog post, we’ll delve into the exciting realm of running Wasm outside the browser, exploring its advantages and the relevant specifications.

Until recently, I ignored Wasm, as it didn’t seem to have much relevance for me as a backend / cloud developer. However, I started getting curious when I read about Wasm running outside the browser with a new standard called WASI, and when Docker founder Solomon Hykes declared Wasm on the server to be the future of computing.

If WASM+WASI existed in 2008, we wouldn't have needed to created Docker. That's how important it is. Webassembly on the server is the future of computing. A standardized system interface was the missing link. Let's hope WASI is up to the task! https://t.co/wnXQg4kwa4

— Solomon Hykes (@solomonstre) March 27, 2019

My curiosity was piqued when one of the key findings of the CNCF 2022 Annual Survey declared that containers are the new normal and Wasm is the future.

If Wasm on the server is indeed the future of computing, we owe it to ourselves to learn more about it now.

Wasm outside the browser with WASI

WASI aims to enable the execution of Wasm modules outside the browser environment, presenting an alternative to running applications in virtual machines or containers. Leveraging Wasm with WASI offers several notable benefits over containers:

- Faster Execution: Wasm applications exhibit significantly faster startup times (tens of milliseconds) than serverless functions or containerized solutions (hundreds of milliseconds), practically eliminating cold-start delays.

- Reduced Footprint: Wasm modules built with languages like Rust are much smaller (roughly 2-3x) than equivalent OCI container images.

- Enhanced Security: Wasm apps operate within a deny-by-default sandbox, ensuring an extra layer of security compared to containers that follow an allow-by-default model.

- Portability: Unlike containers, which are built for a specific platform, Wasm modules can run unchanged on any host that has a Wasm runtime, across different architectures and operating systems.
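To make the deny-by-default model a little more concrete, here’s a minimal Rust sketch that simply tries to read a file (the file name `config.txt` is a hypothetical example). Natively, the read succeeds whenever the process has OS-level permission. Compiled for a WASI target, my understanding is that it only succeeds if the host explicitly grants access to a directory (for example with wasmtime’s `--dir` flag); otherwise the runtime returns an error instead of touching the host filesystem.

```rust
use std::fs;
use std::io;

/// Tries to read a file as UTF-8 text.
/// Under a WASI runtime, this only succeeds if the host preopened a
/// directory containing `path` (e.g. via wasmtime's `--dir` flag).
fn try_read(path: &str) -> io::Result<String> {
    fs::read_to_string(path)
}

fn main() {
    // Hypothetical file name used for illustration.
    let path = "config.txt";
    match try_read(path) {
        Ok(contents) => println!("read {} bytes from {}", contents.len(), path),
        // Without a capability grant, a WASI runtime denies the open;
        // the exact error kind and message depend on the runtime.
        Err(e) => eprintln!("cannot read {}: {}", path, e),
    }
}
```

The same source behaves differently only because of what the host grants, which is the point: access is opt-in per directory rather than inherited from the user running the process.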

Portability

Let’s take a moment to look into the portability of Wasm, as it’s kind of a big deal.

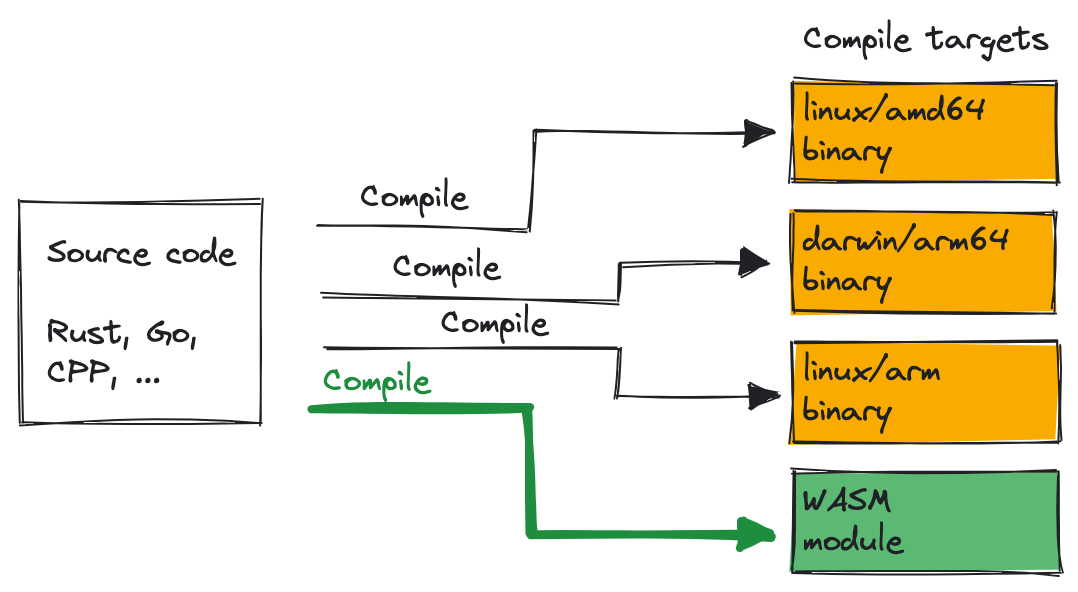

Normally, you’d compile your source code for a specific target such as linux/amd64 or linux/arm. In Wasm, there’s a single compile target: A Wasm module.

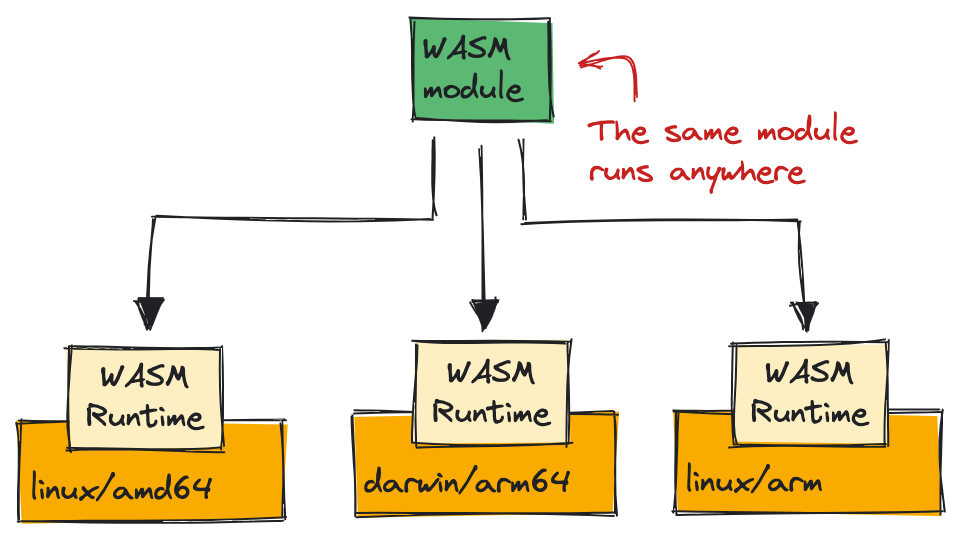

Once you have the WASM module, the same module runs anywhere within a WASM runtime:

This level of portability is unmatched. Java’s vision of “Write once, run anywhere” is finally being realized with Wasm, for any language that can compile to Wasm+WASI.
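As a sketch of the single-compile-target idea, here’s a small Rust program. The build and run commands in the comments assume a recent Rust toolchain, where the WASI target is named `wasm32-wasip1` (older toolchains call it `wasm32-wasi`), and `<app>` is a placeholder for your crate name. The source is identical for both builds; only the `--target` flag decides whether you get a native binary or a Wasm module.

```rust
use std::env::consts::ARCH;

/// Reports which kind of build is running. `cfg!(target_arch = "wasm32")`
/// is evaluated at compile time, so the same source yields a native
/// binary or a Wasm module depending only on the --target flag.
fn build_kind() -> &'static str {
    if cfg!(target_arch = "wasm32") {
        "portable Wasm module"
    } else {
        "native binary"
    }
}

fn main() {
    // Build once for Wasm (target name assumes a recent toolchain):
    //   rustup target add wasm32-wasip1
    //   cargo build --release --target wasm32-wasip1
    // Then run the same .wasm file with any WASI-capable runtime, e.g.:
    //   wasmtime target/wasm32-wasip1/release/<app>.wasm
    //   wasmedge target/wasm32-wasip1/release/<app>.wasm
    //   wasmer run target/wasm32-wasip1/release/<app>.wasm
    println!("running a {} (compiled for arch {})", build_kind(), ARCH);
}
```

The `.wasm` file is the artifact you ship; the runtime, not the compiler, deals with the host’s CPU and operating system.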

Wasm runtimes

The key to the portability story is the Wasm runtime. To execute Wasm bytecode, a Wasm runtime is required. Several Wasm runtimes exist (see awesome-wasm-runtimes), each with a different focus.

Some popular Wasm runtimes that support WASI are:

- wasmtime: Developed by the Bytecode Alliance, designed for server and cloud environments.

- wasmedge: Supported by CNCF, primarily focuses on edge devices, catering to the requirements of resource-constrained environments.

- wasmer: Another widely adopted Wasm runtime.

WASI: The System Interface for Wasm

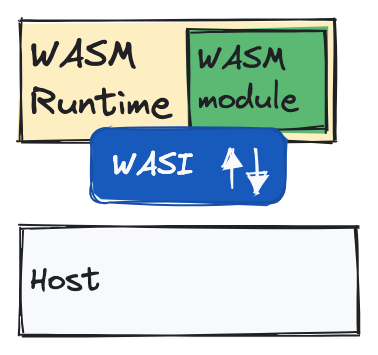

To enable Wasm code to interact with the underlying system, a system interface is required. This is precisely what the WebAssembly System Interface (WASI) provides. WASI defines a standardized set of interfaces and functionalities that Wasm runtimes implement.

WASI provides a subset of POSIX-inspired functionalities in its Preview1 version. It includes features such as file read/write operations within a sandboxed environment, program arguments, environment variables, hardware clock access, random number generation, and limited socket support.
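Here’s a minimal Rust sketch that touches three of those Preview1 features: program arguments, environment variables, and the clock. `GREETING_NAME` is a hypothetical variable name chosen for illustration. When the program is compiled to a WASI target, Rust’s standard library routes these calls through WASI imports, and the runtime only passes in the arguments and variables the host provides (wasmtime, for example, has an `--env` flag for this).

```rust
use std::env;
use std::time::{SystemTime, UNIX_EPOCH};

/// Builds a greeting from the program arguments and an optional name.
/// Under WASI, both inputs come from whatever the host hands the runtime.
fn greeting(args: &[String], name_var: Option<String>) -> String {
    let who = name_var.unwrap_or_else(|| "world".to_string());
    format!("Hello, {}! ({} extra args)", who, args.len())
}

fn main() {
    // Skip argv[0], the program name.
    let args: Vec<String> = env::args().skip(1).collect();
    // GREETING_NAME is a hypothetical variable name used for illustration.
    let name = env::var("GREETING_NAME").ok();
    // Wall-clock time; on a WASI target this goes through WASI's clock API.
    let secs = SystemTime::now()
        .duration_since(UNIX_EPOCH)
        .map(|d| d.as_secs())
        .unwrap_or(0);
    println!("{} at t={}s", greeting(&args, name), secs);
}
```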

However, it’s important to note that WASI is still in the proposal stage, and certain capabilities, like full socket support, are not yet available. This severely limits the type of applications you can currently build with Wasm+Wasi.

To enhance WASI’s capabilities, some Wasm runtimes, like wasmedge, have implemented their own socket extensions for languages like Rust, JavaScript, and C. Additionally, projects like WAGI enable the use of HTTP handlers alongside WASI, while WASIX aims to add complete POSIX support but is currently limited to the wasmer runtime. It’s also worth mentioning that garbage collection support for Wasm, a prerequisite for supporting languages like Java, is still under discussion, with a proposal available on the official WebAssembly GitHub repository.
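To give a feel for the WAGI approach, here’s a rough Rust sketch of an HTTP handler written as a plain WASI command-line program. It assumes WAGI’s CGI-style model, where the response is written to stdout as headers, a blank line, and then the body, and request details such as the query string arrive as environment variables (`QUERY_STRING` here); check the WAGI documentation for the exact variables it sets.

```rust
use std::env;

/// Builds a CGI-style HTTP response: headers, a blank line, then the body.
fn respond(query: &str) -> String {
    let body = if query.is_empty() {
        "Hello from Wasm!".to_string()
    } else {
        format!("Hello from Wasm! query={}", query)
    };
    format!("Content-Type: text/plain\n\n{}\n", body)
}

fn main() {
    // Assumes the gateway exposes the query string CGI-style in QUERY_STRING.
    let query = env::var("QUERY_STRING").unwrap_or_default();
    print!("{}", respond(&query));
}
```

Nothing here needs socket support: the gateway owns the network connection, and the module only reads its environment and writes to stdout, which fits within what Preview1 offers today.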

As you can see, the Wasm ecosystem around WASI is quite dynamic. We’ll see how WASI and projects around it evolve in the coming months.

Use cases

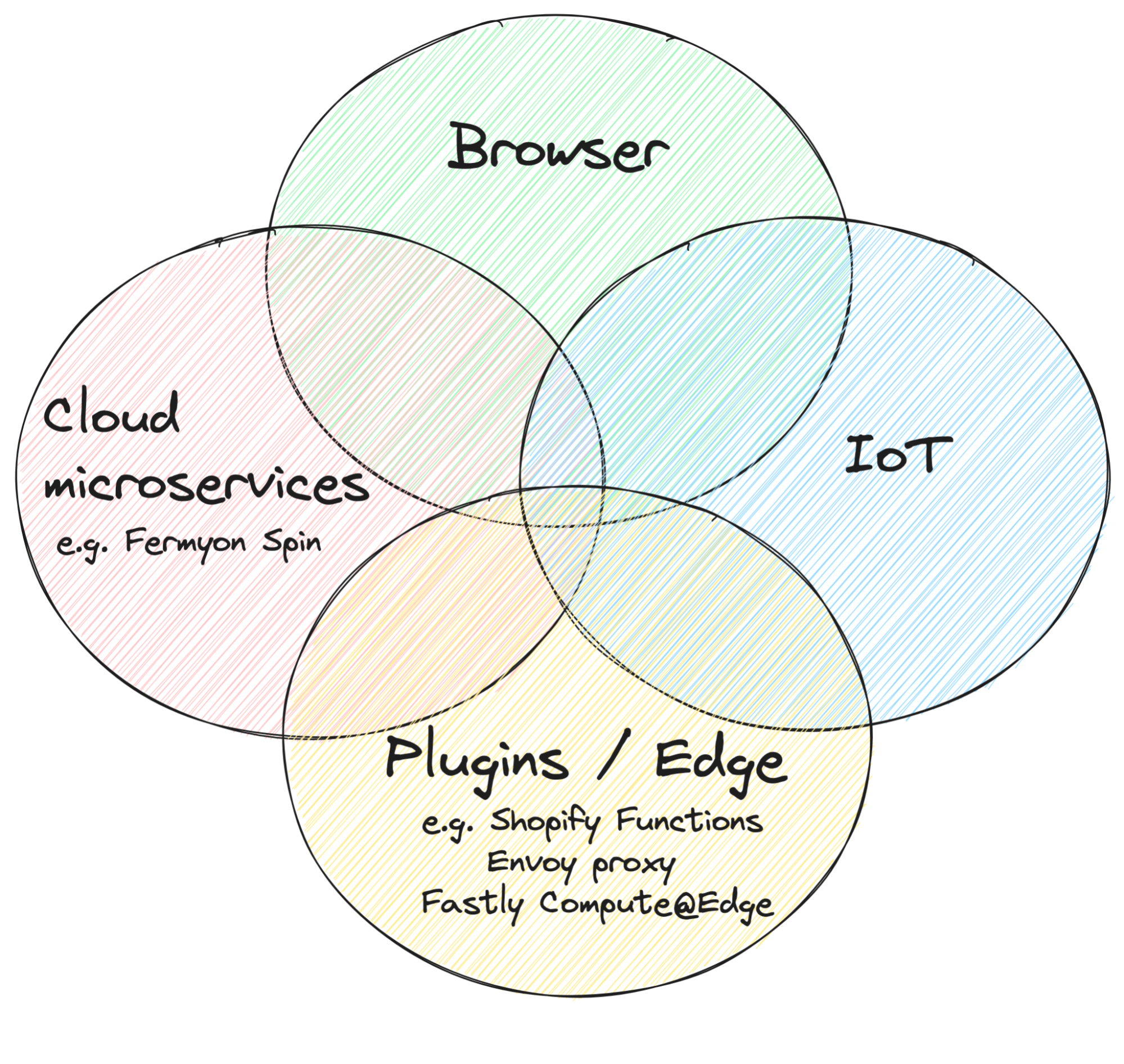

At this point, you might be wondering: this is all cool, but for what kind of use cases should I be thinking about Wasm? There’s an excellent blog post from Matt Butcher where he explains the four areas where Wasm shines.

Running native code in the browser is a well-established and mature use case for Wasm.

A more recent but fairly established use of Wasm is running custom code as plugins, typically at the edge. For example, with Shopify Functions you can extend the checkout process with custom code deployed as a Wasm module, and you can extend Envoy Proxy with Wasm modules written in any language with Wasm support.

Another natural and emerging field for Wasm is IoT. WebAssembly Micro Runtime (WAMR) is a lightweight standalone Wasm runtime with a small footprint, well suited to embedded and IoT devices.

The area I’m most excited about is cloud microservices. So far, we’ve been using containers and serverless functions to run our microservices in the cloud, but I can see Wasm modules becoming the de facto standard for running microservices in a faster, more efficient, and more secure way. Frameworks like Spin show a lot of potential in this area.

With faster execution, reduced footprint, enhanced security, and unmatched portability, Wasm with WASI is poised to revolutionize areas such as edge computing, IoT, and cloud microservices, offering developers exciting new possibilities.

In the next blog post, we’ll dive into WASI to see what it takes to run a Wasm+WASI app in a Wasm runtime. If you have questions or feedback, feel free to reach out to me on Twitter @meteatamel.