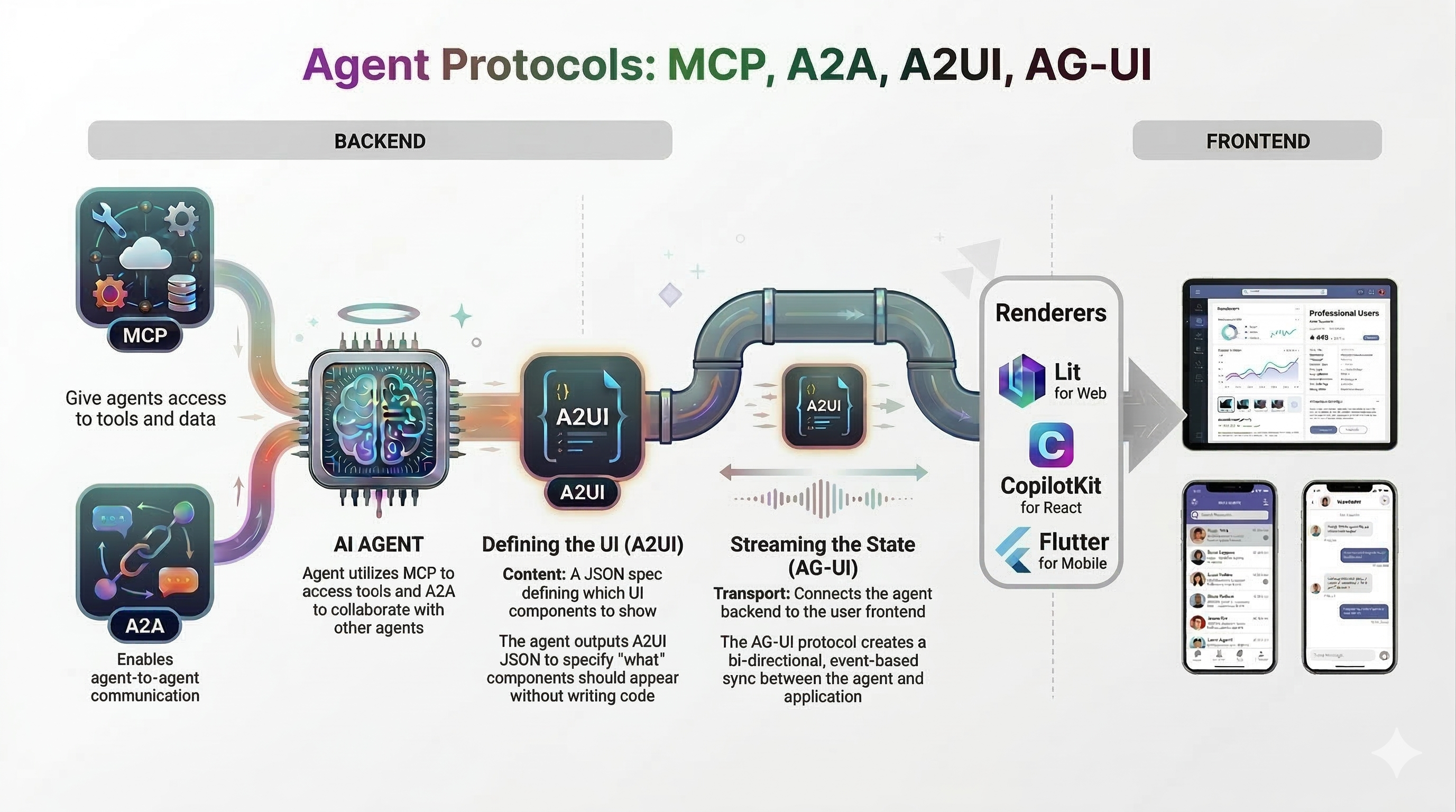

In my "Agent Protocols - MCP, A2A, A2UI, AG-UI" post, I gave a high-level overview of some of the main agent protocols. In the "Agent-User Interaction Protocol (AG-UI) with Agent Development Kit (ADK)" post, I zoomed in on AG-UI and showed how it can be used with Google’s Agent Development Kit (ADK).

In today’s post, I’ll zoom in on the A2UI protocol and show how it can be used with ADK.
